
To monitor Elasticsearch metrics there is an exporter available, elasticsearch_exporter. We run it as `bin/elasticsearch_exporter --es.uri= --es.all`.

Visualization

Now that we had all the metrics we wanted, it was time to build a dashboard. Thankfully, the open source community strikes again! There are quite a few Elasticsearch dashboards on the Grafana dashboards page, but we ended up settling on the one that was built for Elastic Exporter. You can see an example of this dashboard at the top of this article.

Now that we have the visualizations in place, we can leverage Grafana alerting to tell us when key items are amiss. For us, an alert is just an MS Teams message that we all monitor. This will change in the near future as we improve our alerting/alarm strategy, but it works for us now.

We certainly did not blaze any trails here; we just leveraged the same pattern that others had already used. This is what makes the open source community so amazing.
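To make "key items are amiss" a little more concrete, here is a minimal Python sketch of the kind of check an alert rule performs against the exporter's output. It is not how Grafana evaluates rules internally. It only parses a hand-written sample of Prometheus text-format metrics and decides whether the cluster colour warrants an alert. The metric name `elasticsearch_cluster_health_status` and its `color` label are what I understand the exporter to expose, so treat them as assumptions and check your own `/metrics` output. The sample data, the function names, and the "anything but green" threshold are all made up for illustration.

```python
import re

# Matches simple sample lines such as: metric_name{label="value"} 1
# Simplification: label values containing "}" or escaped quotes are not handled.
_SAMPLE_LINE = re.compile(
    r'^(?P<name>[a-zA-Z_:][a-zA-Z0-9_:]*)'
    r'(?:\{(?P<labels>[^}]*)\})?'
    r'\s+(?P<value>\S+)'
)
_LABEL = re.compile(r'(\w+)="([^"]*)"')

# Hand-written sample (hypothetical cluster name), not real exporter output.
SAMPLE = """\
# TYPE elasticsearch_cluster_health_status gauge
elasticsearch_cluster_health_status{cluster="demo",color="green"} 0
elasticsearch_cluster_health_status{cluster="demo",color="red"} 0
elasticsearch_cluster_health_status{cluster="demo",color="yellow"} 1
"""


def parse_samples(text):
    """Return a list of (name, labels, value) tuples, skipping comments."""
    samples = []
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#"):
            continue
        match = _SAMPLE_LINE.match(line)
        if match is None:
            continue
        labels = dict(_LABEL.findall(match.group("labels") or ""))
        samples.append((match.group("name"), labels, float(match.group("value"))))
    return samples


def cluster_color(samples):
    """Return the colour whose health gauge is set to 1, or None if absent."""
    for name, labels, value in samples:
        if name == "elasticsearch_cluster_health_status" and value == 1.0:
            return labels.get("color")
    return None


def should_alert(samples, ok_colors=("green",)):
    """Alert when the colour is missing or not in the accepted set."""
    return cluster_color(samples) not in ok_colors


if __name__ == "__main__":
    parsed = parse_samples(SAMPLE)
    print(cluster_color(parsed), should_alert(parsed))
```

Treating a missing colour as alert-worthy is a deliberate choice in this sketch: if the exporter stops reporting, that is usually something you want to hear about too.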

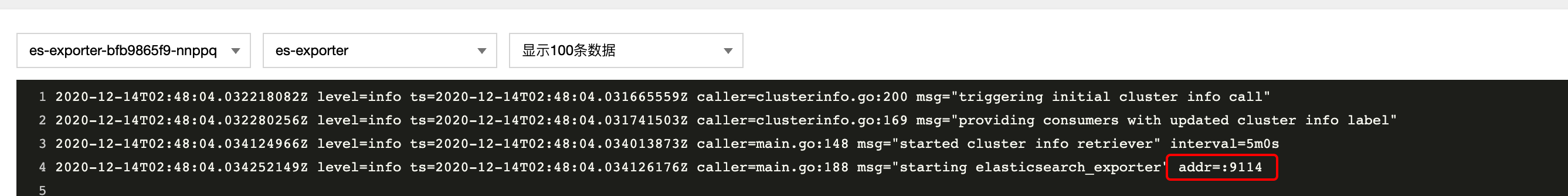

Thankfully, someone else had tackled this problem before us and published their work through the Prometheus project: Elastic Exporter.

Deployment and Config

Deployment of the Elastic Exporter couldn't be simpler! There are a couple of Docker containers out there already, so we just leveraged one of those and deployed it via Kubernetes. The Elastic Exporter team also maintains a Helm chart if you want to go that route. For us, we deployed the container like any other container in our environment, through our own Helm charts that the DevSecOps team maintains. There are lots of configuration items possible with the Elastic Exporter, all of which are defined in the README.md, but we ended up running with the default settings for everything and only defined the es.uri and es.all settings via the Entrypoint setting.
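As a small illustration of that entrypoint, here is a Python sketch that assembles the argument list for the exporter. The double-dash flag spelling (`--es.uri`, `--es.all`) is how I understand the exporter's README to document them; the function name and the Elasticsearch address in the usage line are hypothetical, so substitute your own.

```python
def exporter_entrypoint(es_uri: str, all_nodes: bool = True) -> list[str]:
    """Build the container entrypoint for elasticsearch_exporter.

    es_uri    -- address of the Elasticsearch node to scrape (assumed value)
    all_nodes -- when True, add --es.all to query stats for every node
    """
    args = ["bin/elasticsearch_exporter", f"--es.uri={es_uri}"]
    if all_nodes:
        args.append("--es.all")
    return args


if __name__ == "__main__":
    # Hypothetical in-cluster address; replace with your own URI.
    print(exporter_entrypoint("http://elasticsearch:9200"))
```

The resulting list maps directly onto a container's entrypoint or args setting, which is all we overrode; everything else stayed at the exporter's defaults.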

Development and QA are in our local data center, so we didn't have the same functionality that AWS provided. We do our best to standardize on a single pattern for all of our environments, which includes observability. We also wanted to keep our monitoring to a single pane of glass, which is Grafana for us. Now that we had a pattern down, it was time to find a good monitoring solution.

For those of you who have read my other articles, you will know that we are moving away from the Elastic Stack in favor of Loki. While we are nearing the end of that transition, we still needed to monitor our Elasticsearch instances. We are utilizing OpenSearch in AWS for our production, and while AWS has a whole slew of monitoring and alarm capabilities (all for a fee, of course), not all of our Elasticsearch instances are in AWS.
